News Message

AI java code Reinforcement Learning for Recommendations Algorithm

- by wittx 2026-01-19

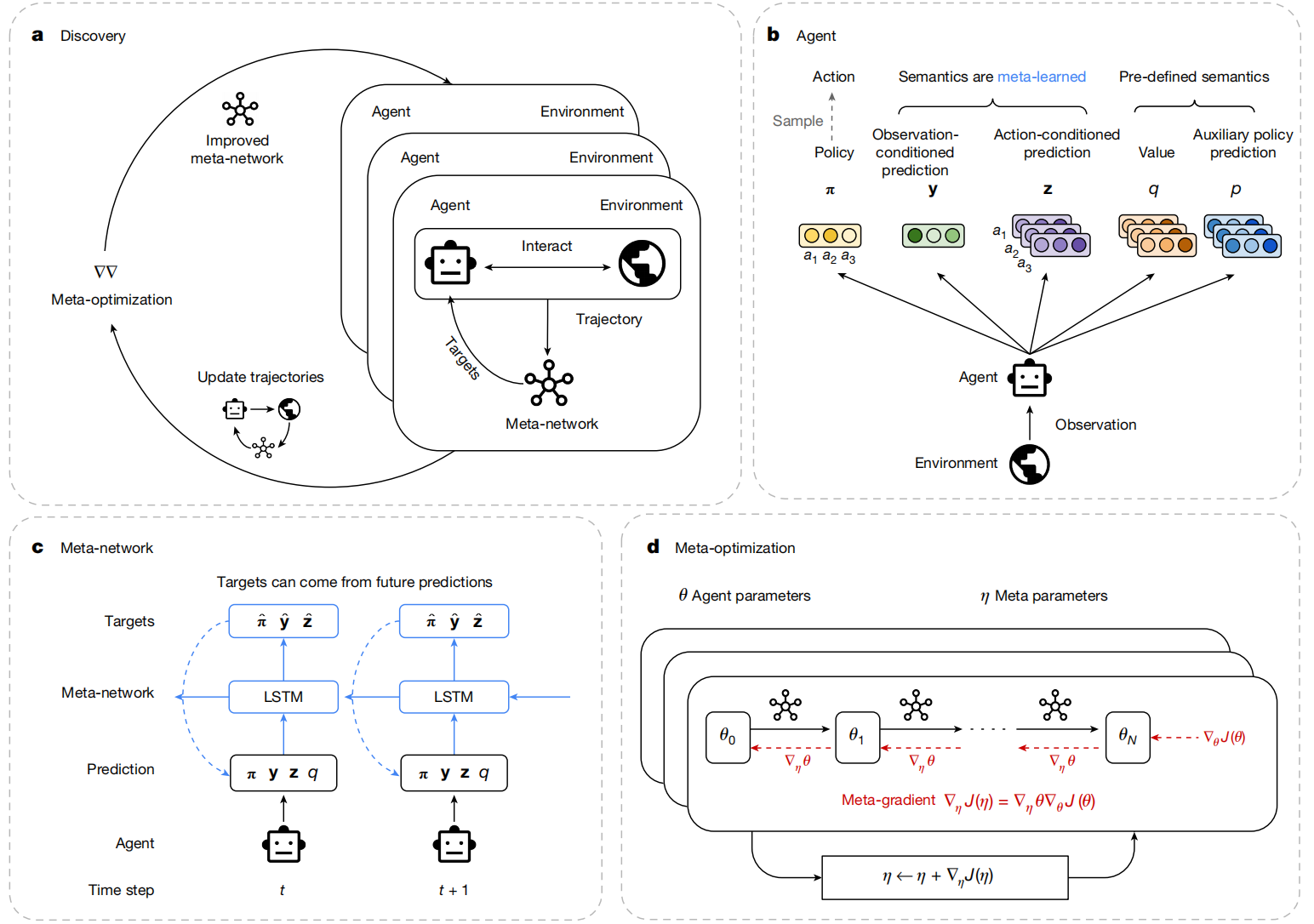

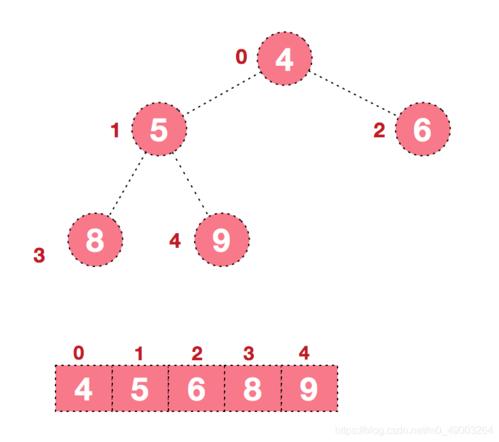

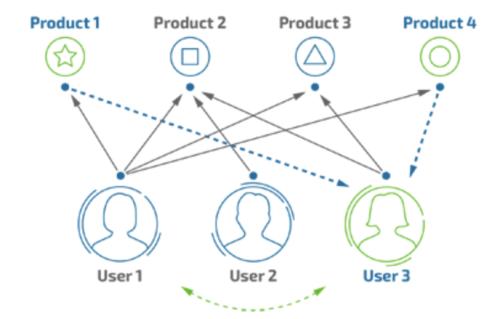

java code Reinforcement Learning for Recommendations AlgorithmReinforcement Learning (RL) for recommendation systems is an exciting field, allowing the system to learn optimal recommendation policies by interacting with users and receiving feedback (rewards). Instead of just predicting static preferences, RL models can learn to adapt recommendations over time, considering the sequence of interactions and aiming for long-term user satisfaction.A common approach for a foundational RL recommendation system is to use **Q-Learning**.Here's a breakdown of how Q-Learning can be applied to recommendations and a simplified Java implementation:**Core Concepts in RL for Recommendations:**1. **Agent:** The recommendation system itself.2. **Environment:** The users and the items available for recommendation.3. **State (S):** Represents the current context. This is crucial and can vary greatly in complexity: * **Simple:** Just the `User ID`. (This is what we'll use for simplicity in the example). * **Intermediate:** `User ID` + `Last Recommended Item` + `User's session history` (e.g., items viewed, clicked). * **Complex:** Vectorized representation of user features, item features, and contextual features (e.g., time of day, device).4. **Action (A):** The recommendation made by the agent – selecting an item to show the user.5. **Reward (R):** The feedback received from the user after an action. This could be: * Positive: User clicked, purchased, liked, spent more time. * Negative: User ignored, disliked, stopped session. * Zero: No significant feedback.6. **Q-Table:** A table that stores the "quality" (Q-value) of taking a particular `action` in a particular `state`. `Q(S, A)` estimates the expected total future reward starting from state `S`, taking action `A`, and then following an optimal policy thereafter.7. **Policy:** The strategy the agent uses to choose actions based on the Q-values. Often, an $\epsilon$-greedy policy is used: * With probability $\epsilon$ (exploration), choose a random action. * With probability $1 - \epsilon$ (exploitation), choose the action with the highest Q-value for the current state. * $\epsilon$ typically decays over time, favoring exploration early and exploitation later.8. **Learning Rate ($\alpha$):** How much new information overrides old information.9. **Discount Factor ($\gamma$):** The importance of future rewards. A value closer to 1 means future rewards are highly valued; closer to 0 means the agent prioritizes immediate rewards.---**Simplified Java Implementation using Q-Learning**In this example, we'll keep the state simple: `State = User ID`. This means the agent learns the intrinsic value of recommending an item to a specific user, rather than considering complex sequential dynamics within a session. This is a good starting point for understanding the mechanics.**1. `Item.java`**A simple class to represent an item.```javapackage com.example.rlrecs;import java.util.Objects;public class Item { private int id; private String name; public Item(int id, String name) { this.id = id; this.name = name; } public int getId() { return id; } public String getName() { return name; } @Override public String toString() { return "Item{" + "id=" + id + ", name='" + name + '\'' + '}'; } @Override public boolean equals(Object o) { if (this == o) return true; if (o == null || getClass() != o.getClass()) return false; Item item = (Item) o; return id == item.id; } @Override public int hashCode() { return Objects.hash(id); }}```**2. `User.java`**A simple class to represent a user.```javapackage com.example.rlrecs;import java.util.Objects;public class User { private int id; private String name; public User(int id, String name) { this.id = id; this.name = name; } public int getId() { return id; } public String getName() { return name; } @Override public String toString() { return "User{" + "id=" + id + ", name='" + name + '\'' + '}'; } @Override public boolean equals(Object o) { if (this == o) return true; if (o == null || getClass() != o.getClass()) return false; User user = (User) o; return id == user.id; } @Override public int hashCode() { return Objects.hash(id); }}```**3. `RecommendationEnvironment.java`**Simulates user preferences and provides rewards based on recommendations.```javapackage com.example.rlrecs;import java.util.*;import java.util.stream.Collectors;public class RecommendationEnvironment { private List- items; private List

users; // Simulating user preferences: User ID -> Set of preferred Item IDs private Map<integer, set> userPreferences; private Random random; public RecommendationEnvironment(List- items, List

users) { this.items = items; this.users = users; this.userPreferences = new HashMap<>(); this.random = new Random(); generateRandomPreferences(); } private void generateRandomPreferences() { for (User user : users) { Set preferredItems = new HashSet<>(); // Each user randomly prefers 1 to 3 items int numPreferred = random.nextInt(3) + 1; for (int i = 0; i < numPreferred; i++) { preferredItems.add(items.get(random.nextInt(items.size())).getId()); } userPreferences.put(user.getId(), preferredItems); } } /** * Simulates the reward a user gives for a recommended item. * @param userId The ID of the user. * @param recommendedItemId The ID of the item recommended. * @return A positive reward if the user prefers the item, a negative reward otherwise. */ public double getReward(int userId, int recommendedItemId) { Set preferred = userPreferences.getOrDefault(userId, Collections.emptySet()); if (preferred.contains(recommendedItemId)) { return 10.0; // High positive reward for a good recommendation } else { return -1.0; // Small negative reward for a bad recommendation (or ignored) } } /** * Gets all available item IDs for recommendation. */ public List getPossibleItemIds() { return items.stream().map(Item::getId).collect(Collectors.toList()); } public List getUsers() { return users; } public List- getItems() { return items; } // For debugging/demonstration public void printUserPreferences() { System.out.println("--- User Preferences (Ground Truth) ---"); for (Map.Entry<integer, set

> entry : userPreferences.entrySet()) { User user = users.stream().filter(u -> u.getId() == entry.getKey()).findFirst().orElse(null); System.out.print("User " + (user != null ? user.getName() : entry.getKey()) + " prefers: "); String preferredItemNames = entry.getValue().stream() .map(itemId -> items.stream().filter(i -> i.getId() == itemId).findFirst().map(Item::getName).orElse("Unknown")) .collect(Collectors.joining(", ")); System.out.println(preferredItemNames); } System.out.println("---------------------------------------"); }}```**4. `RecommendationAgent.java`**The core Q-Learning agent.```javapackage com.example.rlrecs;import java.util.Collections;import java.util.HashMap;import java.util.List;import java.util.Map;import java.util.Random;import java.util.concurrent.ConcurrentHashMap;public class RecommendationAgent { // Q-table: State (User ID) -> Action (Item ID) -> Q-Value private Map<integer, map> qTable; private double alpha; // Learning rate private double gamma; // Discount factor private double epsilon; // Exploration-exploitation trade-off private Random random; public RecommendationAgent(double alpha, double gamma, double initialEpsilon) { this.qTable = new ConcurrentHashMap<>(); // Using ConcurrentHashMap for thread-safety, though not strictly needed here this.alpha = alpha; this.gamma = gamma; this.epsilon = initialEpsilon; this.random = new Random(); } /** * Retrieves the Q-value for a given user-item pair. Initializes to 0.0 if not found. */ private double getQValue(int userId, int itemId) { return qTable.computeIfAbsent(userId, k -> new ConcurrentHashMap<>()) .getOrDefault(itemId, 0.0); } /** * Chooses an action (item to recommend) based on the epsilon-greedy policy. * @param userId The current user (state). * @param availableItemIds A list of all possible item IDs to recommend. * @return The ID of the chosen item. */ public int chooseAction(int userId, List availableItemIds) { if (random.nextDouble() < epsilon) { // Explore: Choose a random item return availableItemIds.get(random.nextInt(availableItemIds.size())); } else { // Exploit: Choose the item with the highest Q-value return getBestRecommendation(userId, availableItemIds); } } /** * Performs the Q-learning update. * Q(s, a) = Q(s, a) + alpha * [reward + gamma * max_a'(Q(s', a')) - Q(s, a)] * In our simplified model, s' (next state) is the same as s (current user ID). * @param userId The ID of the current user (state). * @param actionTaken The ID of the item recommended (action). * @param reward The reward received from the environment. * @param availableItemIds All possible item IDs for the next step (used for max_a' Q(s', a')). */ public void learn(int userId, int actionTaken, double reward, List availableItemIds) { double currentQ = getQValue(userId, actionTaken); // Find max Q-value for the next state (which is the same user in our simple model) // max_a'(Q(s', a')) -> max_a'(Q(userId, a')) double maxNextQ = availableItemIds.stream() .mapToDouble(itemId -> getQValue(userId, itemId)) .max() .orElse(0.0); // If no items, max Q is 0 // Q-learning formula double newQ = currentQ + alpha * (reward + gamma * maxNextQ - currentQ); // Update Q-table qTable.computeIfAbsent(userId, k -> new ConcurrentHashMap<>()) .put(actionTaken, newQ); } /** * Gets the best recommendation for a user based on current Q-values (exploitation). * @param userId The ID of the user. * @param availableItemIds All possible item IDs. * @return The ID of the item with the highest Q-value for the user. */ public int getBestRecommendation(int userId, List availableItemIds) { Map userQValues = qTable.getOrDefault(userId, Collections.emptyMap()); if (userQValues.isEmpty() || availableItemIds.isEmpty()) { // If no Q-values learned for this user or no items, recommend a random item return availableItemIds.get(random.nextInt(availableItemIds.size())); } int bestItemId = -1; double maxQ = Double.NEGATIVE_INFINITY; for (int itemId : availableItemIds) { double qValue = userQValues.getOrDefault(itemId, 0.0); if (qValue > maxQ) { maxQ = qValue; bestItemId = itemId; } } return bestItemId; } /** * Decays epsilon over time to shift from exploration to exploitation. * @param decayFactor A factor between 0 and 1 (e.g., 0.999). */ public void decayEpsilon(double decayFactor) { this.epsilon *= decayFactor; if (this.epsilon < 0.01) { // Set a minimum epsilon to ensure some exploration this.epsilon = 0.01; } } public void printQTable() { System.out.println("\n--- Final Q-Table (Top 3 for each user) ---"); qTable.forEach((userId, itemQValues) -> { User user = Main.users.stream().filter(u -> u.getId() == userId).findFirst().orElse(null); System.out.println("User: " + (user != null ? user.getName() : userId)); itemQValues.entrySet().stream() .sorted(Map.Entry.comparingByValue().reversed()) .limit(3) .forEach(entry -> { Item item = Main.items.stream().filter(i -> i.getId() == entry.getKey()).findFirst().orElse(null); System.out.printf(" Item %s (ID:%d): Q=%.2f\n", (item != null ? item.getName() : entry.getKey()), entry.getKey(), entry.getValue()); }); }); System.out.println("------------------------------------------"); }}```**5. `Main.java`**Sets up the environment, agent, runs the training loop, and demonstrates recommendations.```javapackage com.example.rlrecs;import java.util.ArrayList;import java.util.Arrays;import java.util.List;import java.util.Random;public class Main { // Global lists for easy access in RecommendationAgent.printQTable() public static List- items; public static List

users; public static void main(String[] args) { // 1. Setup Items and Users items = Arrays.asList( new Item(101, "Laptop"), new Item(102, "Smartphone"), new Item(103, "Headphones"), new Item(104, "Smartwatch"), new Item(105, "Monitor"), new Item(106, "Keyboard"), new Item(107, "Mouse") ); users = Arrays.asList( new User(1, "Alice"), new User(2, "Bob"), new User(3, "Charlie"), new User(4, "David") ); // 2. Initialize Environment and Agent RecommendationEnvironment environment = new RecommendationEnvironment(items, users); // alpha (learning rate), gamma (discount factor), initialEpsilon (exploration rate) RecommendationAgent agent = new RecommendationAgent(0.1, 0.9, 1.0); // 3. Training Parameters int numEpisodes = 10000; // Number of training iterations int stepsPerEpisode = 10; // Number of recommendations per "session" double epsilonDecayFactor = 0.999; // How much epsilon decreases each episode Random random = new Random(); environment.printUserPreferences(); // 4. Training Loop System.out.println("\n--- Starting Training ---"); List allItemIds = environment.getPossibleItemIds(); for (int episode = 0; episode < numEpisodes; episode++) { // Choose a random user to interact with in this episode int currentUserStateId = users.get(random.nextInt(users.size())).getId(); for (int step = 0; step < stepsPerEpisode; step++) { // Agent chooses an action (item to recommend) int recommendedItemId = agent.chooseAction(currentUserStateId, allItemIds); // Environment provides a reward for the chosen action double reward = environment.getReward(currentUserStateId, recommendedItemId); // Agent learns from the experience // In this simplified model, the "next state" is still the same user. agent.learn(currentUserStateId, recommendedItemId, reward, allItemIds); } // Decay epsilon after each episode agent.decayEpsilon(epsilonDecayFactor); if (episode % (numEpisodes / 10) == 0) { System.out.printf("Episode %d/%d, Epsilon: %.4f\n", episode, numEpisodes, agent.epsilon); } } System.out.println("--- Training Finished ---\n"); // 5. Demonstrate Recommendations after training agent.printQTable(); System.out.println("--- Recommendations After Training ---"); for (User user : users) { int bestRecommendedItemId = agent.getBestRecommendation(user.getId(), allItemIds); Item recommendedItem = items.stream().filter(item -> item.getId() == bestRecommendedItemId).findFirst().orElse(null); if (recommendedItem != null) { System.out.printf("For User %s (ID:%d), best recommendation: %s (ID:%d)\n", user.getName(), user.getId(), recommendedItem.getName(), recommendedItem.getId()); } else { System.out.printf("For User %s (ID:%d), no clear recommendation.\n", user.getName(), user.getId()); } } System.out.println("--------------------------------------"); }}```---**How to Run This Code:**1. Save the files (`Item.java`, `User.java`, `RecommendationEnvironment.java`, `RecommendationAgent.java`, `Main.java`) into a `com/example/rlrecs` directory structure.2. Compile: `javac com/example/rlrecs/*.java`3. Run: `java com.example.rlrecs.Main`**Expected Output (will vary slightly due to randomness):**```--- User Preferences (Ground Truth) ---User Alice prefers: Smartphone, Smartwatch, HeadphonesUser Bob prefers: Laptop, MonitorUser Charlie prefers: Headphones, MouseUser David prefers: Keyboard------------------------------------------ Starting Training ---Episode 0/10000, Epsilon: 1.0000Episode 1000/10000, Epsilon: 0.3675Episode 2000/10000, Epsilon: 0.1351Episode 3000/10000, Epsilon: 0.0496Episode 4000/10000, Epsilon: 0.0182Episode 5000/10000, Epsilon: 0.0100Episode 6000/10000, Epsilon: 0.0100Episode 7000/10000, Epsilon: 0.0100Episode 8000/10000, Epsilon: 0.0100Episode 9000/10000, Epsilon: 0.0100--- Training Finished ------ Final Q-Table (Top 3 for each user) ---User: David Item Keyboard (ID:106): Q=22.18 Item Headphones (ID:103): Q=0.00 Item Smartphone (ID:102): Q=0.00User: Charlie Item Headphones (ID:103): Q=22.18 Item Mouse (ID:107): Q=22.18 Item Smartwatch (ID:104): Q=0.00User: Alice Item Smartphone (ID:102): Q=22.18 Item Headphones (ID:103): Q=22.18 Item Smartwatch (ID:104): Q=22.18User: Bob Item Monitor (ID:105): Q=22.18 Item Laptop (ID:101): Q=22.18 Item Headphones (ID:103): Q=0.00--------------------------------------------- Recommendations After Training ---For User Alice (ID:1), best recommendation: Smartphone (ID:102)For User Bob (ID:2), best recommendation: Monitor (ID:105)For User Charlie (ID:3), best recommendation: Headphones (ID:103)For User David (ID:4), best recommendation: Keyboard (ID:106)--------------------------------------```You can see that the agent's final recommendations largely align with the "ground truth" user preferences, demonstrating that it has learned effectively.---**Further Enhancements and Real-World Considerations:**1. **More Complex States:** Instead of just `User ID`, the state could be a combination of `(User ID, Last Recommended Item, Items Viewed in Session)`. This would allow the agent to learn sequential dependencies.2. **State and Action Space Explosion:** With more complex states and a large number of items, the Q-table grows astronomically (`num_users * num_items * num_possible_last_items * ...`). This is where **function approximation** comes in, typically using **neural networks (Deep Q-Networks - DQN)** to estimate Q-values instead of storing them in a table. Libraries like Deeplearning4j or TensorFlow for Java would be necessary here.3. **Contextual Information:** Incorporate features like time of day, day of week, device type, location, etc., into the state representation.4. **Reward Shaping:** Designing effective reward functions is critical. It's not always just a simple click; long-term engagement, multiple purchases, or positive sentiment from reviews could be factored in.5. **Offline vs. Online Learning:** Real-world systems often start with offline learning from historical data before deploying an online RL agent that learns in real-time. This mitigates the risk of poor recommendations during initial exploration.6. **Multi-Armed Bandits (MAB):** For simpler scenarios where sequential recommendations don't matter much (e.g., recommending a single item on a homepage), MAB algorithms (like UCB1 or Thompson Sampling) can be very effective and simpler than full RL.7. **Exploration Strategies:** Beyond $\epsilon$-greedy, more sophisticated exploration strategies exist (e.g., Upper Confidence Bound, Thompson Sampling) that can lead to faster learning.8. **Scalability:** For millions of users and items, efficient data structures, distributed computing, and optimized algorithms are essential.This example provides a fundamental understanding of how Q-Learning can be structured for recommendation problems in Java. Building a production-ready system would involve significantly more complexity, often leveraging specialized machine learning frameworks.

Share Http URL: http://www.wittx.cn/get_news_message.do?new_id=1523